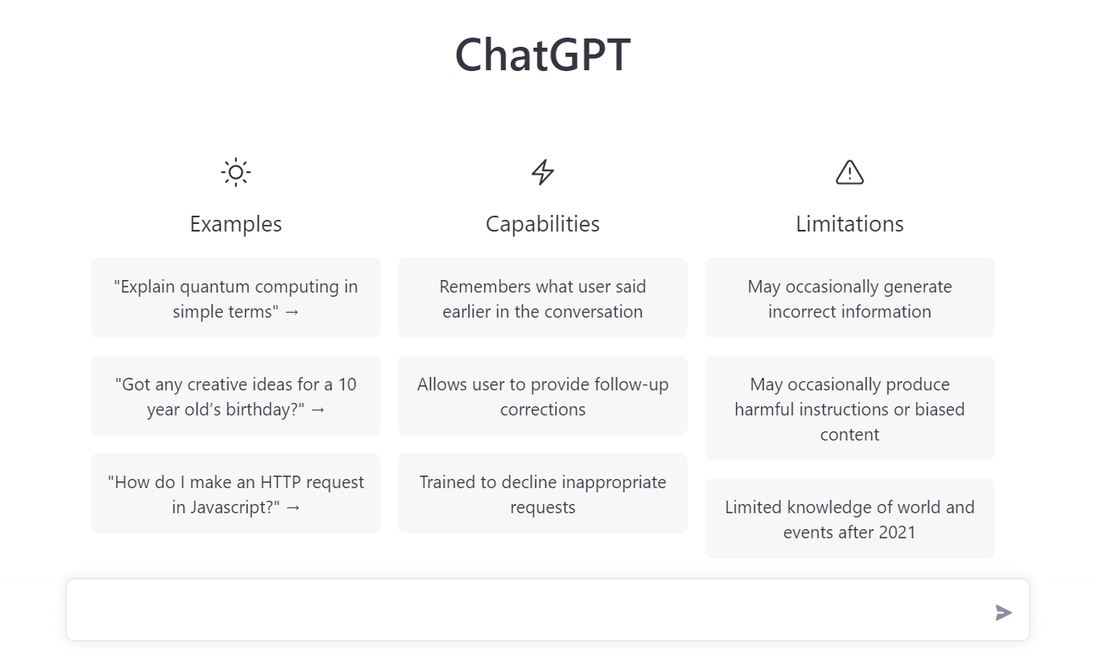

One Wharton professor recently fed the chatbot the final exam questions for a core MBA course and found that, despite some surprising math errors, he would have given it a B or a B-minus in the class.

And yet, not all educators are shying away from the bot.

This year, Mollick is not only allowing his students to use ChatGPT; they are required to. And he has formally adopted an A.I. policy into his syllabus for the first time.

He teaches classes in entrepreneurship and innovation, and said the early indications were that the move was going great.

“The truth is, I probably couldn't have stopped them even if I didn't require it,” Mollick said.

This week he ran a session where students were asked to come up with ideas for their class project. Almost everyone had ChatGPT running and was asking it to generate projects, and then they interrogated the bot's ideas with further prompts.

“And the ideas so far are great, partially as a result of that set of interactions,” Mollick said.

He readily admits he alternates between enthusiasm and anxiety about how artificial intelligence can change assessments in the classroom, but he believes educators need to move with the times.

“We taught people how to do math in a world with calculators,” he said. Now the challenge is for educators to teach students how the world has changed again, and how they can adapt to that.

Mollick's new policy states that using A.I. is an “emerging skill”; that it can be wrong and students should check its results against other sources; and that they will be responsible for any errors or omissions provided by the tool.

And, perhaps most importantly, students need to acknowledge when and how they have used it.

“Failure to do so is in violation of academic honesty policies,” the policy reads.

Mollick isn't the first to try to put guardrails in place for a post-ChatGPT world.

Earlier this month, 22-year-old Princeton student Edward Tian created an app to detect whether something had been written by a machine. Named GPTZero, it was so popular that when he launched it, the app crashed from overuse.

“People should know when something is written by a human or written by a machine,” Tian told NPR of his motivation.

Mollick agrees, but isn't convinced that educators can ever truly stop cheating.

He cites a survey of Stanford students that found many had already used ChatGPT in their final exams, and he points to estimates that thousands of people in places like Kenya are writing essays on behalf of students abroad.

“I think everybody is cheating … I mean, it's happening. So what I'm asking students to do is just be honest with me,” he said. “Tell me what they use ChatGPT for, tell me what they used as prompts to get it to do what they want, and that's all I'm asking from them. We're in a world where this is happening, but now it's just going to be at an even grander scale.”

“I don't think human nature changes as a result of ChatGPT. I think capability did.”
